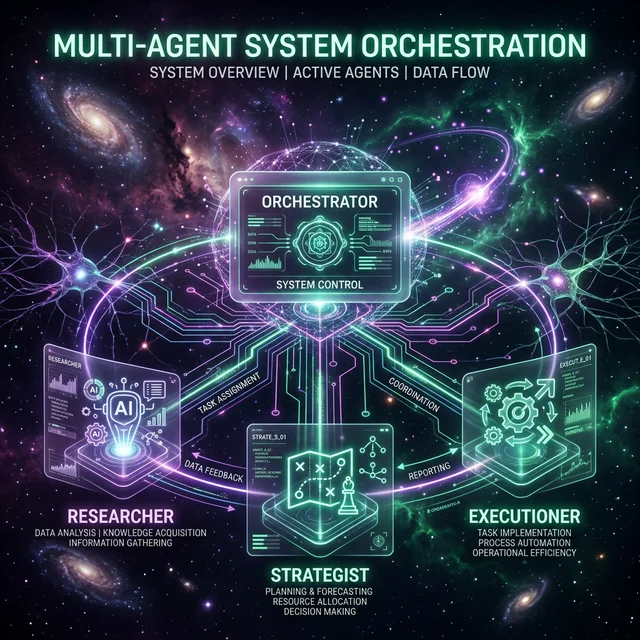

The Shift from Graphics to Pure Agency

Nvidia has officially ended the 'Graphics Processing Unit' era with the launch of the G-Core (Goal-Oriented Compute Core). Where the earlier H-series and B-series chips focused on matrix multiplication for LLM training, G-Core is built specifically for inference-heavy autonomous loops. The architecture is positioned as the hardware answer to software breakthroughs such as Anthropic's Opus 4.

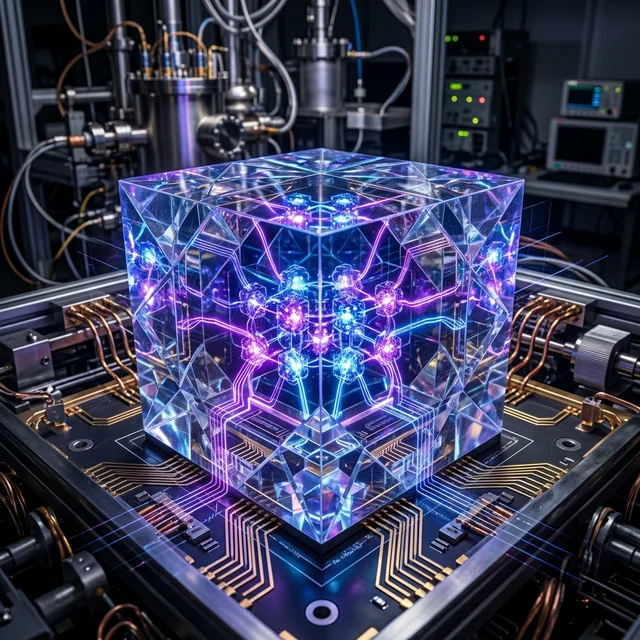

Hardware-Accelerated Recursive Logic

The breakthrough lies in the 'Recursive Logic Engine' (RLE) baked into every silicon die. Traditionally, AI agents such as OpenAI O4-Continuity have struggled with latency when branching into complex sub-goals. G-Core handles these branching decision trees directly in the L1 cache, reducing 'logic-hop' latency by a reported factor of five.
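To make the idea of 'logic hops' concrete, here is a minimal Python sketch of a goal tree in which every parent-to-child branch counts as one hop. The `Goal` class, the helper functions, and the 10-microsecond per-hop cost are all hypothetical illustrations, not part of any Nvidia API or published specification.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Goal:
    """A node in a hypothetical agent goal tree."""
    name: str
    subgoals: list[Goal] = field(default_factory=list)


def count_hops(goal: Goal) -> int:
    """Count every parent-to-child branch ('logic hop') below this goal."""
    return sum(1 + count_hops(sub) for sub in goal.subgoals)


def total_hop_latency_us(goal: Goal, per_hop_us: float) -> float:
    """Total branching latency, assuming each hop costs per_hop_us microseconds."""
    return count_hops(goal) * per_hop_us


# Illustrative plan: one top-level goal that branches into sub-goals.
plan = Goal("ship release", [
    Goal("fix bugs", [Goal("triage"), Goal("patch")]),
    Goal("write notes"),
])

hops = count_hops(plan)
latency_us = total_hop_latency_us(plan, per_hop_us=10.0)  # assumed cost per hop
print(f"{hops} hops, {latency_us} microseconds of branching latency")
```

Because the hop count grows with every level of sub-goals, any per-hop saving is multiplied across the whole tree, which is why branching latency matters more for agents than for single-pass inference.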

The Sovereignty of Edge Intelligence

By localizing entire agentic workflows onto a single workstation, Nvidia is decentralizing the AI landscape. Companies no longer need massive server clusters to run high-fidelity autonomous project managers. 'We are putting the power of a data center into a single chip,' Jensen Huang stated during the keynote. This localized intelligence is expected to become the backbone of the 6G AI-native telecom systems currently being deployed globally.

Future-Proofing the Agentic Workforce

As Nvidia pivots to G-Core, the market for traditional general-purpose GPUs is expected to shift. The focus is now on 'compute per decision' rather than 'compute per token': what matters is the cost of each completed agent decision, not of each generated token. For developers building the next generation of autonomous corporations, the G-Core represents the definitive foundational hardware for the late 2020s.
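The difference between the two metrics is simple arithmetic, sketched below in Python. The function names and all workload figures are assumptions chosen for illustration; no measured G-Core numbers are implied.

```python
def compute_per_token(total_flops: float, tokens: int) -> float:
    """Average floating-point operations spent per generated token."""
    if tokens <= 0:
        raise ValueError("tokens must be positive")
    return total_flops / tokens


def compute_per_decision(total_flops: float, decisions: int) -> float:
    """Average floating-point operations spent per completed agent decision."""
    if decisions <= 0:
        raise ValueError("decisions must be positive")
    return total_flops / decisions


# Hypothetical agent run: the same total compute viewed through both metrics.
total_flops = 6.0e12   # assumed total work for the run
tokens = 3000          # assumed tokens generated along the way
decisions = 12         # assumed decisions the agent actually committed to

per_token = compute_per_token(total_flops, tokens)
per_decision = compute_per_decision(total_flops, decisions)
print(f"per token: {per_token:.3g} FLOPs, per decision: {per_decision:.3g} FLOPs")
```

In this sketch an agent that reaches the same decisions with fewer intermediate tokens would look worse per token but unchanged per decision, which is the distinction the new metric is meant to capture.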
