Building the AI Engine of 2027

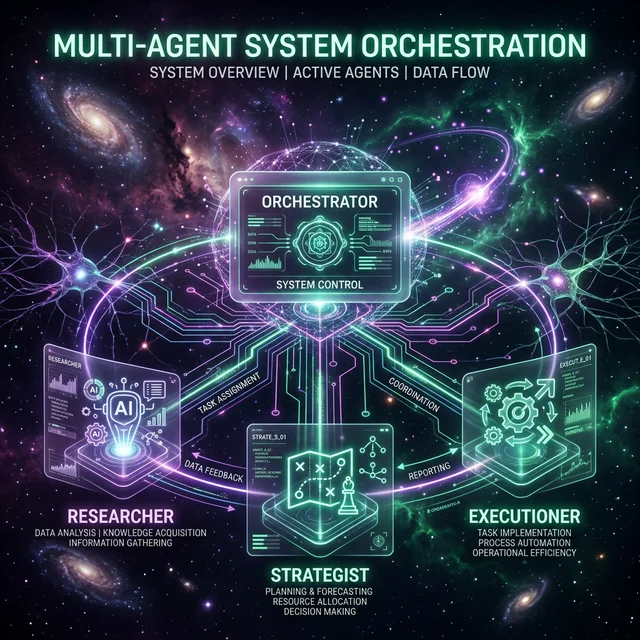

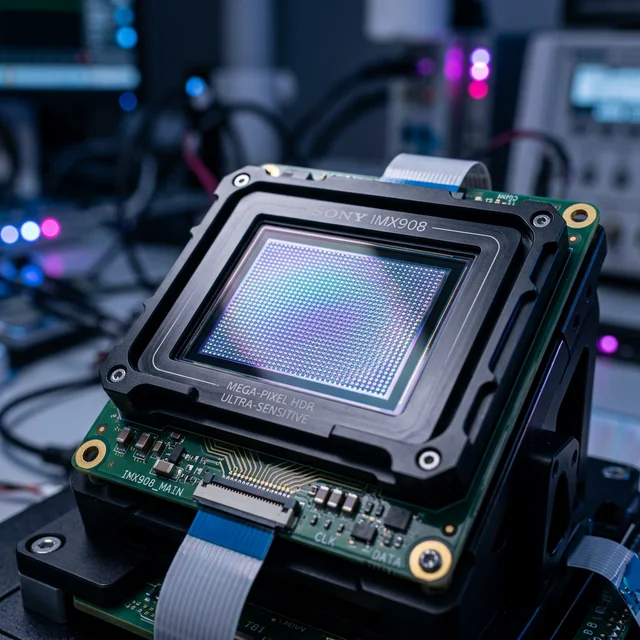

In a landmark joint announcement today, Samsung Electronics and AMD solidified a strategic partnership that they say will define the next five years of AI hardware. Samsung has been named the primary supplier of HBM4 high-bandwidth memory for AMD's future AI accelerators, a move intended to give the next wave of 'Agentic' computing the bandwidth it needs to process trillion-parameter models at the edge.

Technical Synergies

The alliance goes beyond simple supply. Engineers from both firms are co-developing advanced DRAM solutions specifically for AMD's EPYC server CPUs, aiming to reduce power consumption by 25% while increasing data throughput by 40%. The targets are a direct response to the soaring energy costs facing data center operators worldwide. "We aren't just building chips; we are building the neural pathways for the world's second workforce," said a senior AMD architect.
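To put the two headline targets in perspective, here is a rough back-of-the-envelope sketch of what they would mean for energy spent per unit of data moved. It assumes both percentages are measured against the same baseline and apply at the same time, which the announcement does not state. The helper name `relative_energy_per_bit` is hypothetical and used only for illustration.

```python
# Assumption: the 25% power cut and the 40% throughput gain are both relative
# to the same (unspecified) baseline memory and hold simultaneously.
def relative_energy_per_bit(power_reduction: float, throughput_gain: float) -> float:
    """Return the new energy per bit as a fraction of the baseline.

    Energy per bit is proportional to power divided by throughput.
    """
    relative_power = 1.0 - power_reduction        # e.g. 0.75 of baseline power
    relative_throughput = 1.0 + throughput_gain   # e.g. 1.40 of baseline throughput
    return relative_power / relative_throughput


ratio = relative_energy_per_bit(0.25, 0.40)
print(f"Energy per bit vs. baseline: {ratio:.3f}")
print(f"Implied reduction: {(1 - ratio) * 100:.1f}%")
```

Under those assumptions, hitting both targets would cut energy per bit transferred by roughly 46%, a larger efficiency gain than either figure suggests on its own.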

Market Impact

This partnership significantly strengthens AMD's position in the high-end AI market, providing a robust counter to Nvidia's current dominance. For Samsung, it secures a massive lead in the competitive HBM market. For FuturEdge readers, this is the clearest sign yet that the AI boom is moving into a phase of deep industrial integration, where the hardware itself is being specialized for autonomous task execution.
